I/O workload acceleration at NSCC with IME

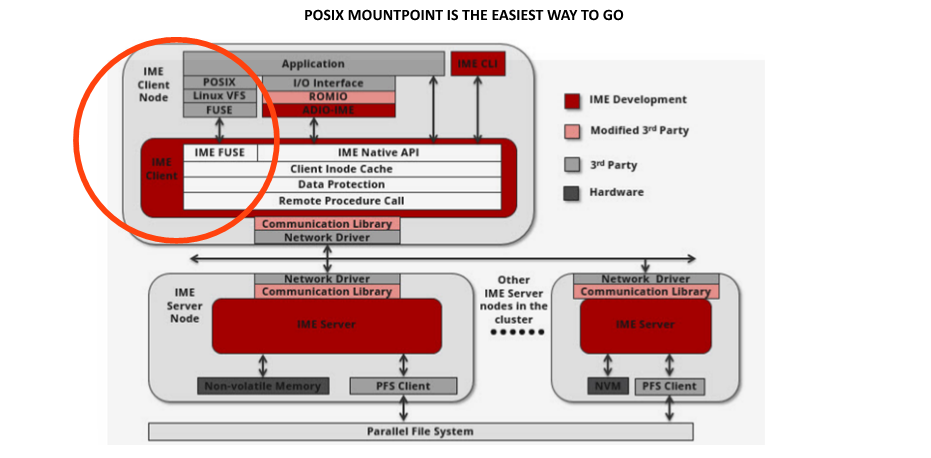

FAST ACCESS: JUST USE MOUNTPOINT /ime

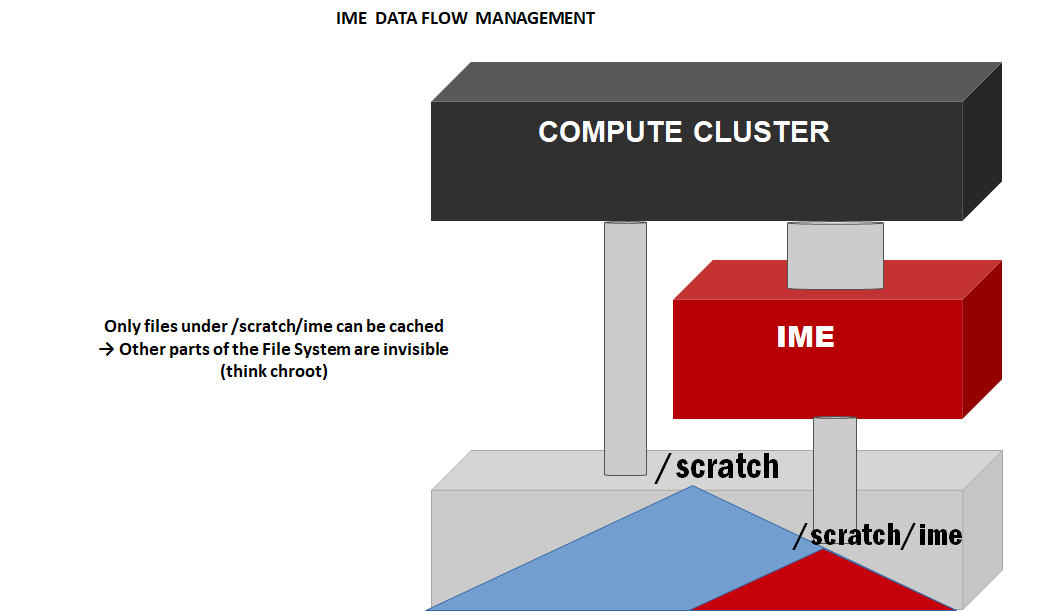

IME is mounted on all compute nodes: just store your files in /ime

[ddnsupport@mon02 IO500]$ df -h

Filesystem Size Used Avail Use% Mounted on

/dev/sda3 273G 216G 44G 84% /

tmpfs 63G 136K 63G 1% /dev/shm

/dev/sda1 488M 33M 431M 7% /boot

192.168.156.29@o2ib,192.168.156.30@o2ib:/scratch 2.8P 2.2P 664T 77% /scratch

192.168.156.29@o2ib,192.168.156.30@o2ib:/seq 1.2P 892T 240T 79% /seq

192.168.160.104:/home/ 3.4P 2.7P 775T 78% /home

192.168.160.101:/data/ 5.3P 3.9P 1.4P 74% /data

imefs 2.8P 2.2P 664T 77% /ime

% ls /ime/users

academy adm astar create gov hackathon industry ntu nus smu sutd
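Because IME appears as an ordinary mount point, a job script can check that /ime is actually mounted on the node before writing to it. The following is a minimal sketch of such a check, not an NSCC-provided tool: `is_mount_point` is a hypothetical helper name, and it assumes the POSIX output format of `df -P`, where the last column is the mount point.

```shell
#!/bin/sh
# Sketch: succeed only if the given directory is itself a mount point.
# is_mount_point is a hypothetical helper, not part of IME or PBS.
is_mount_point() {
    [ -d "$1" ] || return 1
    # df -P prints one header line, then one line whose last field is the mount point
    mp=$(df -P "$1" 2>/dev/null | awk 'NR==2 {print $NF}')
    [ "$mp" = "$1" ]
}

if is_mount_point /ime; then
    echo "/ime is mounted"
else
    echo "/ime is not mounted on this node"
fi
```

The check compares the mount point reported by `df` with the directory itself, so a plain directory named /ime on the root file system would not pass.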

FAST ACCESS: examples

(Example only; change to the directory that matches your home directory path. For example, if your home directory is /home/users/nscc/user1, your corresponding path in IME will be /ime/users/nscc/user1.)
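The mapping described above (the same path with /home replaced by /ime) can be sketched as a small shell function. `home_to_ime` is a hypothetical helper name introduced for illustration; it assumes your IME directory mirrors your home directory path exactly as in the example.

```shell
#!/bin/sh
# Sketch: derive the /ime counterpart of a path under /home.
# home_to_ime is a hypothetical helper, not an NSCC or DDN tool.
home_to_ime() {
    case "$1" in
        /home/*) printf '%s\n' "/ime${1#/home}" ;;   # swap the leading /home for /ime
        *)       echo "not under /home: $1" >&2; return 1 ;;
    esac
}

home_to_ime /home/users/nscc/user1   # prints /ime/users/nscc/user1
```

In a job script, `cd "$(home_to_ime "$HOME")"` would then change to the matching IME directory, provided it exists.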

% cd /ime/users/industry

% my_big_application >result.out

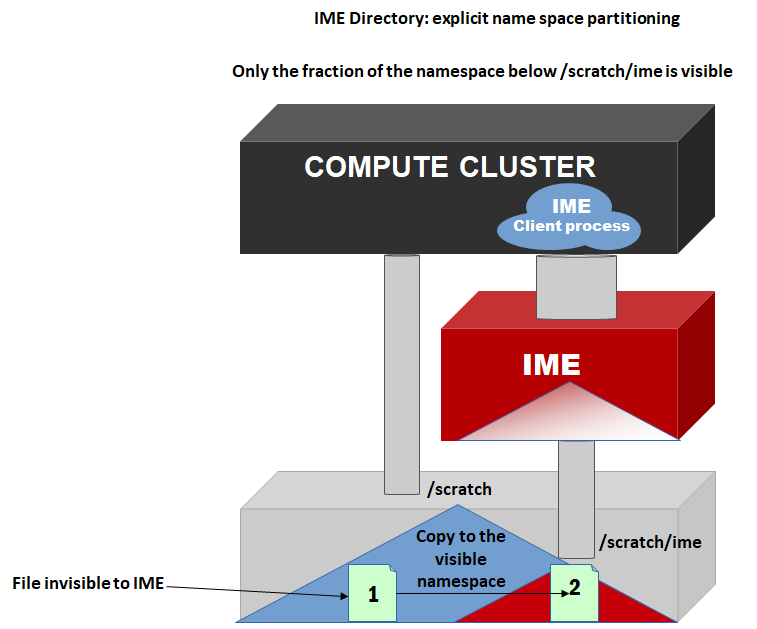

Or change the file name in your code / configuration file from /scratch/users/industry/<my_dir>

to

/ime/users/industry/<my_dir>
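If the path appears in many places in a configuration file, the substitution can be scripted. The sketch below uses `sed` to print a copy of a file with every /scratch/users/ prefix replaced by /ime/users/. `scratch_to_ime` and the `output_dir` setting are hypothetical names used only for illustration.

```shell
#!/bin/sh
# Sketch: print a configuration file with /scratch/users/ paths pointed at /ime/users/.
# scratch_to_ime is a hypothetical helper name.
scratch_to_ime() {
    # '#' is the sed delimiter so the slashes in the paths need no escaping
    sed 's#/scratch/users/#/ime/users/#g' "$1"
}

# Example with a throwaway file; output_dir is an invented setting name
cfg=$(mktemp)
echo 'output_dir = /scratch/users/industry/my_dir' > "$cfg"
scratch_to_ime "$cfg"    # prints: output_dir = /ime/users/industry/my_dir
rm -f "$cfg"
```

The function writes to standard output rather than editing in place, so the original file is left untouched until you have checked the result.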

From 2 to 5 GB/s per client node

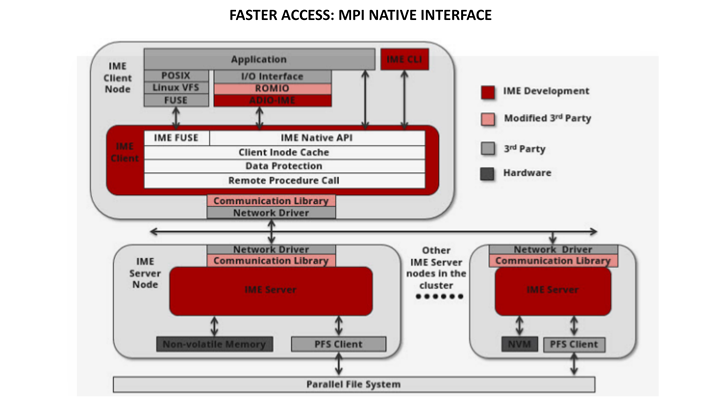

FASTER ACCESS: USE DDN MPI

% MPI_DDN_ROOT=/opt/ddn/mvapich

% export LD_LIBRARY_PATH=$MPI_DDN_ROOT/lib64

% export LD_LIBRARY_PATH+=:/usr/local/lib

% mpirun -genv MV2_NUM_HCAS 1 -genv MV2_CPU_BINDING_LEVEL core -genv MV2_CPU_BINDING_POLICY scatter -ppn 12 /home/adm/sup/ddnsupport/jacquaviva/mdtest -r -t -F -w 3901 -e 3901 -d /ime/ddnsupport/IO500/datafiles/mdt_hard -n 5000

# Up to 10 GB/s per client node
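The commands above replace LD_LIBRARY_PATH outright. If your environment already relies on other entries in that variable, one possible variation is to put the DDN MPI libraries in front of them instead. This is a sketch under that assumption, not the documented NSCC setup; `prepend_ld_path` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: put the DDN MPI libraries first in LD_LIBRARY_PATH, keeping existing entries.
# prepend_ld_path is a hypothetical helper name.
prepend_ld_path() {
    # ${VAR:+...} expands only when VAR is set and non-empty, avoiding a stray trailing ':'
    LD_LIBRARY_PATH="$1${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}"
    export LD_LIBRARY_PATH
}

MPI_DDN_ROOT=/opt/ddn/mvapich
prepend_ld_path /usr/local/lib
prepend_ld_path "$MPI_DDN_ROOT/lib64"   # added last, so it is searched first
echo "$LD_LIBRARY_PATH"
```

Keeping the DDN directory first preserves the search order of the original commands, at the cost of leaving older entries in place that could still pull in unrelated libraries.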

Better integration: native support of IME in major MPI distros

- Patch merged in the master tree of OpenMPI
  - Expected to be part of the 3.1.1 release
- Patch merged in the master tree of Mvapich
  - Expected to be part of the v3.3b3 release

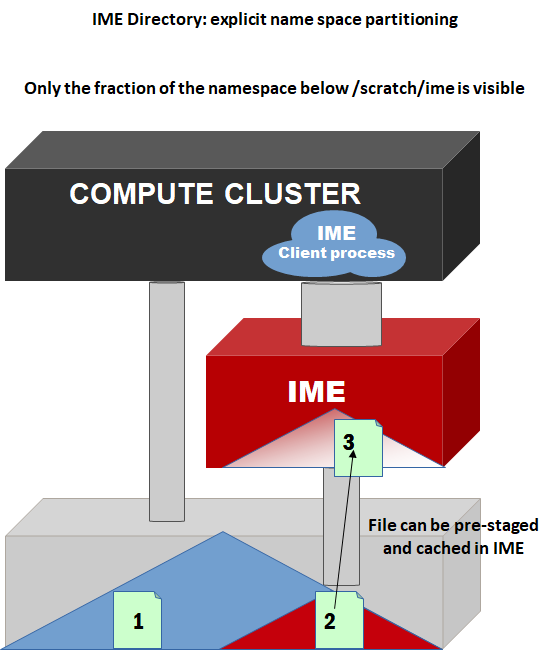

Integration with PBS: pre-staging data

#!/bin/sh

#PBS -l walltime=3:30:00

#PBS -l select=32:ncpus=24:mpiprocs=24

#PBS -N io500_large

#PBS -o io500_large.o

#PBS -e io500_large.err

MPI_DDN_ROOT=/opt/ddn/mvapich

export LD_LIBRARY_PATH=$MPI_DDN_ROOT/lib64

export LD_LIBRARY_PATH+=:/usr/local/lib

/opt/ddn/ime/bin/ime-prestage -b ime:///scratch/ime/<my_dir>/<my_file>

/home/adm/sup/ddnsupport/jacquaviva/IO500/io-500-dev/io500.sh

echo "qstat -f ${PBS_JOBID}"

echo " "

qstat -f ${PBS_JOBID}
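When a job reads several input files, the pre-staging step can be repeated in a loop. The sketch below reuses exactly the invocation form shown in the job script (`ime-prestage -b` followed by an `ime://` URI) and stops at the first failure. `prestage_all` and the `IME_PRESTAGE` override variable are hypothetical names introduced here; no other `ime-prestage` options are assumed.

```shell
#!/bin/sh
# Sketch: pre-stage several files with the same ime-prestage invocation as the job script.
# prestage_all and the IME_PRESTAGE override are hypothetical names.
IME_PRESTAGE=${IME_PRESTAGE:-/opt/ddn/ime/bin/ime-prestage}

prestage_all() {
    for f in "$@"; do
        # Same form as in the job script: -b, then ime:// followed by the absolute path
        "$IME_PRESTAGE" -b "ime://$f" || { echo "pre-stage failed: $f" >&2; return 1; }
    done
}

# In a job script, before launching the application:
# prestage_all /scratch/ime/<my_dir>/<file_1> /scratch/ime/<my_dir>/<file_2>
```

Stopping on the first error means the application is not launched against partially staged input, assuming the job script checks the function's return status.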

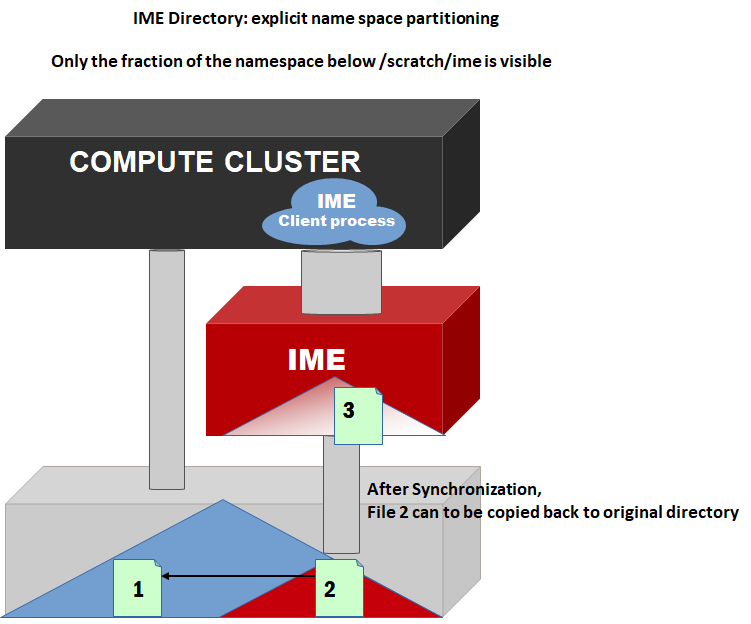

Integration with PBS: Staging-out

#!/bin/sh

#PBS -l walltime=3:30:00

#PBS -l select=32:ncpus=24:mpiprocs=24

#PBS -N io500_large

#PBS -o io500_large.o

#PBS -e io500_large.err

MPI_DDN_ROOT=/opt/ddn/mvapich

export LD_LIBRARY_PATH=$MPI_DDN_ROOT/lib64

export LD_LIBRARY_PATH+=:/usr/local/lib

/opt/ddn/ime/bin/ime-prestage -b ime:///scratch/ime/<my_dir>/<my_file>

/home/adm/sup/ddnsupport/jacquaviva/IO500/io-500-dev/io500.sh

/opt/ddn/ime/bin/ime-sync -b ime:///scratch/ime/<my_dir>/<my_file>

cp /scratch/ime/<my_dir>/<my_file> /home/<my_dir>/result

echo "qstat -f ${PBS_JOBID}"

echo " "

qstat -f ${PBS_JOBID}
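In the script above, the copy runs even if the sync step fails. A more defensive variant, sketched below, copies the result only after `ime-sync` has returned successfully. It assumes that `ime-sync` reports failure through a non-zero exit status, which this document does not confirm; `stage_out` and the `IME_SYNC` override variable are hypothetical names.

```shell
#!/bin/sh
# Sketch: copy a result file out only after ime-sync has succeeded.
# stage_out and the IME_SYNC override are hypothetical names.
IME_SYNC=${IME_SYNC:-/opt/ddn/ime/bin/ime-sync}

stage_out() {
    src=$1     # path of the file, as passed to ime-sync after ime://
    dest=$2    # destination of the copy
    if ! "$IME_SYNC" -b "ime://$src"; then
        echo "ime-sync failed for $src; not copying" >&2
        return 1
    fi
    cp "$src" "$dest"
}

# In a job script, after the application has finished:
# stage_out /scratch/ime/<my_dir>/<my_file> /home/<my_dir>/result
```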

Template PBS job scripts

Example PBS job scripts can also be found on the system in the directory:

/app/examples/ime
